Newsroom

All news

OCTG

April 15th, 2026

Geothermal

March 24th, 2026

OCTG

February 18th, 2026

Client

February 4th, 2026

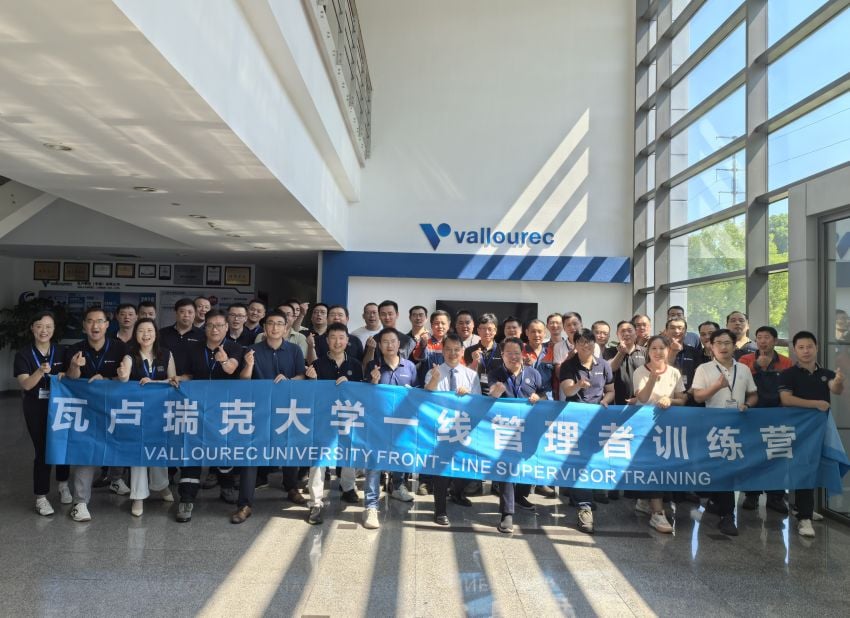

Event

February 3rd, 2026

HR

November 26th, 2025